![Table from: The kappa statistic in reliability studies: use, interpretation, and sample size requirements](https://d3i71xaburhd42.cloudfront.net/6d3768fde2a9dbf78644f0a817d4470c836e60b7/3-Table1-1.png)

The kappa statistic in reliability studies: use, interpretation, and sample size requirements (Semantic Scholar)
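For reference, this is the standard definition of Cohen's kappa for two raters. It comes from the general literature, not from the figure. Here $p_{ij}$ is the proportion of items that rater 1 placed in category $i$ and rater 2 placed in category $j$. The marginals are $p_{i+}$ and $p_{+i}$.

$$
\kappa = \frac{p_o - p_e}{1 - p_e}, \qquad
p_o = \sum_{i} p_{ii}, \qquad
p_e = \sum_{i} p_{i+}\, p_{+i}
$$

A value of $\kappa = 1$ means perfect agreement. A value of $\kappa = 0$ means agreement no better than chance given each rater's marginal distribution.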

Systematic literature reviews in software engineering—enhancement of the study selection process using Cohen's Kappa statistic - ScienceDirect

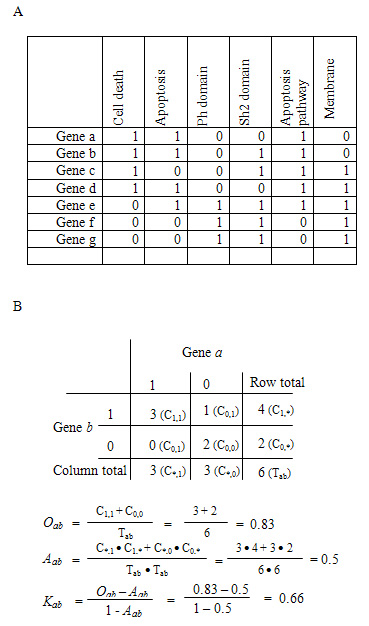

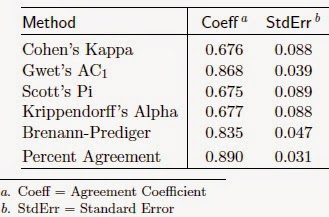

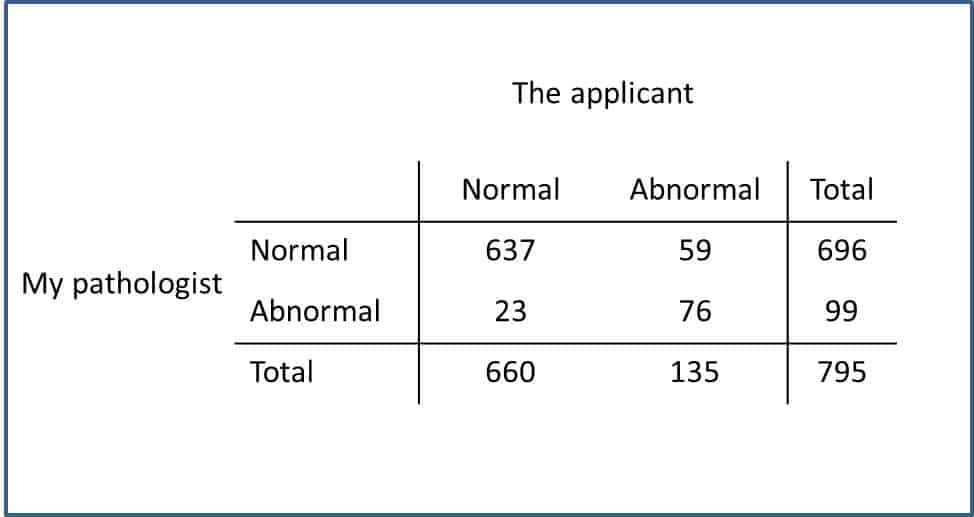

3. Measurement and interpretation of the Kappa coefficient.

Table 1. Degree of Agreement Between Two Reviewers. Reviewer 2 rejec
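The sketch below computes kappa from a two-reviewer agreement table of the kind described above. It applies the standard Cohen's kappa formula to a square contingency table. The counts are hypothetical and are not taken from the article's Table 1. The category order (include, reject) is also an assumption made for illustration.

```python
def cohens_kappa(table):
    """Cohen's kappa for two raters, from a square contingency table.

    table[i][j] is the number of items that reviewer 1 placed in category i
    and reviewer 2 placed in category j.
    """
    k = len(table)
    n = sum(sum(row) for row in table)
    # Observed agreement p_o: share of items on the diagonal.
    p_o = sum(table[i][i] for i in range(k)) / n
    # Marginal totals for each reviewer.
    row_totals = [sum(row) for row in table]
    col_totals = [sum(table[i][j] for i in range(k)) for j in range(k)]
    # Chance agreement p_e: sum of products of the two reviewers' marginal proportions.
    p_e = sum(row_totals[i] * col_totals[i] for i in range(k)) / n ** 2
    if p_e == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical screening counts (rows: reviewer 1, columns: reviewer 2; order: include, reject).
decisions = [[20, 5],
             [10, 65]]
print(round(cohens_kappa(decisions), 3))  # 0.625
```

In this example the reviewers agree on 85% of items. Their marginals alone would produce 60% agreement by chance, which gives kappa = 0.25 / 0.40 = 0.625.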

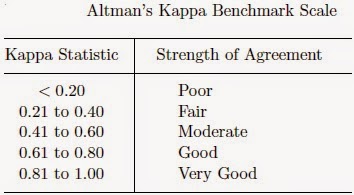

K. Gwet's Inter-Rater Reliability Blog: Benchmarking Agreement Coefficients. Inter-rater reliability: Cohen kappa, Gwet AC1/AC2, Krippendorff Alpha
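The sketch below contrasts two of the coefficients named above on the same two-rater table. Cohen's kappa estimates chance agreement from each rater's own marginals. Gwet's AC1 instead uses $p_e = \frac{1}{q-1}\sum_k \pi_k(1-\pi_k)$, where $\pi_k$ is the average of the two raters' marginal proportions for category $k$. When one category dominates, AC1 typically stays higher than kappa. Krippendorff's alpha is left out to keep the example short. The skewed counts are hypothetical.

```python
def agreement_coefficients(table):
    """Return (Cohen's kappa, Gwet's AC1) for two raters.

    table[i][j] counts items that rater A placed in category i
    and rater B placed in category j.
    """
    q = len(table)
    if q < 2:
        raise ValueError("need at least two categories")
    n = sum(sum(row) for row in table)
    p_o = sum(table[i][i] for i in range(q)) / n
    p_a = [sum(table[i]) / n for i in range(q)]                       # rater A marginals
    p_b = [sum(table[i][j] for i in range(q)) / n for j in range(q)]  # rater B marginals

    # Cohen: chance agreement from the product of each rater's own marginals.
    pe_kappa = sum(p_a[k] * p_b[k] for k in range(q))
    kappa = float("nan") if pe_kappa == 1 else (p_o - pe_kappa) / (1 - pe_kappa)

    # Gwet AC1: chance agreement from the averaged marginals pi_k (always < 1).
    pi = [(p_a[k] + p_b[k]) / 2 for k in range(q)]
    pe_ac1 = sum(p * (1 - p) for p in pi) / (q - 1)
    ac1 = (p_o - pe_ac1) / (1 - pe_ac1)
    return kappa, ac1


# Hypothetical table where most items fall in the first category (90% raw agreement).
skewed = [[80, 5],
          [5, 10]]
kappa, ac1 = agreement_coefficients(skewed)
print(f"kappa={kappa:.3f}  AC1={ac1:.3f}")  # kappa=0.608  AC1=0.866
```

Raw agreement is 90% in both cases. The gap between the two coefficients comes entirely from how each one models chance agreement.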

![Kappa Statistics and Strength of Agreement [44]](https://www.researchgate.net/publication/340998576/figure/tbl2/AS:888538270797824@1588855440209/Kappa-Statistics-and-Strength-of-Agreement-44.png)
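Strength-of-agreement tables like this one usually reproduce the Landis and Koch (1977) bands: Poor, Slight, Fair, Moderate, Substantial and Almost perfect. The sketch below assumes that the figure uses those bands. The published bands are written to two decimals (0.00–0.20, 0.21–0.40, and so on). This sketch treats each upper bound as inclusive so that values such as 0.205 still get a label. That boundary handling is an assumption.

```python
# Assumed Landis & Koch (1977) bands, as (inclusive upper bound, label).
BANDS = [
    (0.20, "Slight"),
    (0.40, "Fair"),
    (0.60, "Moderate"),
    (0.80, "Substantial"),
    (1.00, "Almost perfect"),
]


def strength_of_agreement(kappa: float) -> str:
    """Map a kappa value to a verbal label using the assumed bands above."""
    if not -1.0 <= kappa <= 1.0:
        raise ValueError("kappa must lie in [-1, 1]")
    if kappa < 0:
        return "Poor"
    for upper, label in BANDS:
        if kappa <= upper:
            return label
    return BANDS[-1][1]  # unreachable for kappa <= 1.0; kept for safety


print(strength_of_agreement(0.625))  # Substantial
```

Fixed cut-offs like these ignore the sampling error in an estimated kappa. That limitation is why benchmarking approaches such as Gwet's treat the label with caution.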